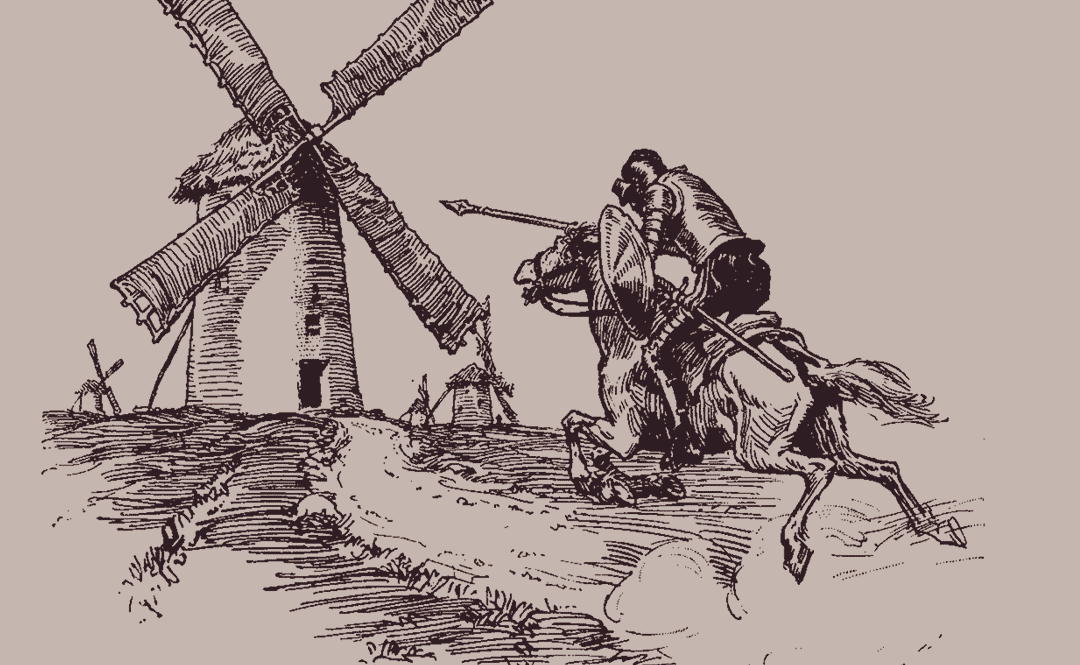

Tilting at Windmills

An announcement on the future of A Modern Stoic

Why do I keep researching and writing on Stoicism and current events, trying to collect and present evidence to both validate my points of view and convince others of their truth? The ChatGPT analysis below, I believe, explains what is happening. So what does this mean for me?

I think my constant drive to find evidence supporting my positions, and to promote those positions in blogs, posts, or books, is a response to the constant attacks on those positions in the media, which trigger the defense mechanisms described in the ChatGPT analysis below. In the end, though, am I changing anyone’s views, or just reaffirming my own, along with those of the limited audience who read what I write? I think it is almost all the latter.

So what then? I can keep pissing into the wind (or cyberspace) in the vain attempt to influence the views of others (my rationalization for my actions), or I can accept that my views are my own, that I have my own reasons for them based on my (biased) analysis of evidence, and that nothing I say is likely to change anyone else’s mind (the psychological reality) if they disagree with my positions. This does not mean that I think I am wrong; it is just that my attempts to convince others are largely a fool’s errand.

Compounding this is the reality that I am old, not well, and not likely to be around much longer to keep tilting at windmills. It is the increasing reminders of this reality in my daily life that have driven this reassessment.

Is the world suddenly going to have an epiphany and exclaim, “It’s so clear! You are so right! Your words have lifted the blinders from my eyes!” Hah! Not going to happen, and it is time to slap down my ego for thinking so.

In recognition of this reality, I’m going to be putting my work on this site on hold and will let the domain registration run out (it still has several years to go). I still agree with what I have written over the years and think there is good advice here for those who may need it, so I will keep this site live while the domain remains active. While I don’t anticipate adding new posts, it may happen that the itch is so strong that I will be forced to scratch it, as I have learned to never say never, especially when it comes to my motivations. But for now, my drive for external confirmation has abated, and it’s time to find something to do with my idle hands and mind to keep them occupied. Michel de Montaigne may be my inspiration, just for my own edification.

If you are reading this, thank you for your interest, and I wish you a long and happy life (hint—I believe Stoicism is the key to achieving that!).

ChatGPT analysis on what drives people to defend their views:

When people hold a belief—whether about politics, personal identity, science, or moral values—it becomes woven into their sense of self and social belonging. Challenging that belief is often experienced not as a benign exchange of information but as a threat to one’s identity. The psychological mechanisms that drive resistance to change and even strengthen the original belief under attack are well-documented in social and cognitive psychology, and several empirical studies illustrate these effects.

At the core is motivated reasoning, the process by which people evaluate evidence in a way that supports conclusions they want to arrive at rather than those that are objectively warranted. When confronted with disconfirming evidence, people don’t process it neutrally; instead, they engage in selective acceptance and criticism. They attend to information that confirms their existing belief and discount information that contradicts it. This pattern is not merely a failure of logic but a psychological strategy for maintaining coherence among beliefs, self-image, and group identity.

A seminal set of experiments demonstrating motivated reasoning was conducted by Charles Lord, Lee Ross, and Mark Lepper (1979). Participants who held strong positions on capital punishment were presented with two studies, one ostensibly supporting the deterrent effect of capital punishment and one opposing it. Rather than moving toward a middle ground, proponents and opponents each rated the study consistent with their position as more methodologically sound. Moreover, exposure to both studies increased participants’ confidence in their initial position rather than decreased it. This pattern of biased evaluation exemplifies how people defend their beliefs by discrediting opposing evidence and bolstering confirming evidence (Lord, Ross, & Lepper, 1979).

A related mechanism is cognitive dissonance. When beliefs and new information conflict, it creates an uncomfortable psychological state. To reduce this discomfort, people will change either the belief or the interpretation of the information. In many cases, especially where a belief is tied to identity or group norms, it is easier (psychologically) to reinterpret or dismiss the new information than to revise the belief. Leon Festinger’s theory of cognitive dissonance (1957) predicted this, and later experiments have shown that when people are publicly committed to a position, they are even more likely to harden that position when faced with contradictory evidence rather than abandon it.

One experimental demonstration was done by Elliot Aronson and colleagues, where participants who had freely chosen to advocate for an unpleasant task subsequently increased their positive evaluation of the task. This “effort justification” effect shows how people rationalize commitments and resist admitting they might have been wrong, which generalizes to belief defense more broadly.

A contemporary formulation of these processes is identity-protective cognition, proposed by Dan Kahan and colleagues. When issues are perceived as connected to cultural or group values, people process information in a way that protects their standing within their group. Instead of beliefs changing in light of evidence, individuals shift interpretations to match group-aligned positions, preserving social identity and status. Several studies using risk perceptions (e.g., climate change, gun control) show that individuals with strong cultural identities selectively interpret scientific information to align with their group’s stance and that correcting misperceptions can backfire if it is perceived as an attack on group identity.

Another strand of research underscores that emotional investment and epistemic certainty influence resistance. Beliefs tied to moral foundations or emotional meaning are less susceptible to change because the cost of altering them is psychologically high. Feedback that appears threatening or condescending tends to trigger defensive processing, whereas information framed in a way that affirms autonomy and shared values is more likely to be considered.

Taken together, these findings illustrate that the urge to defend beliefs and the paradoxical strengthening of those beliefs under attack are products of cognitive, motivational, and social dynamics. They are not random errors but systematic patterns of reasoning shaped by the interaction between evidence, identity, and motivation.

Key studies referenced:

Lord, C. G., Ross, L., & Lepper, M. R. (1979). Biased assimilation and attitude polarization: The effects of prior theories on subsequently considered evidence. Journal of Personality and Social Psychology.

Festinger, L. (1957). A Theory of Cognitive Dissonance. Stanford University Press.

Aronson, E. et al. (specific studies on effort justification and dissonance).

Kahan, D. M. (cultural cognition research on risk perception and identity-protective cognition).